The MCP Connectivity Problem

The most consequential procurement question for an AI vendor selling into a hospital, a federal agency, a payment processor, or a bank is not “how good is your model.” It is “how does your product reach our data.”

The model can be excellent. The product can be production-quality. The sales cycle can be 18 months long, and at month 14 a security architect can ask that question and end the deal.

This essay is about why that happens, and what the existing toolkit looks like when you go through it option by option. The short version: the connectivity story is the hardest part of selling AI into regulated industries, and the available connectivity options were not designed for the operator-to-many-customers shape of an AI product. The result is a category gap that is filled, today, by 6–18 months of bespoke integration work per AI vendor.

What MCP made urgent

The Model Context Protocol made an old problem newly visible. An AI assistant that can call tools is only as good as the tools it can call. Most of the useful tools are inside customer infrastructure: the customer’s CRM, the customer’s ticketing system, the customer’s clinical-data store, the customer’s ledger. The MCP server is the thing that wraps a tool for the model.

The MCP server has to run somewhere. If it runs on the AI vendor’s infrastructure, it has to reach back into the customer’s network, and the customer has to either open an inbound port (typically a non-starter) or deploy a vendor-built connector (a custom integration the vendor has to design and the customer has to audit). If the MCP server runs on the customer’s infrastructure, the AI vendor has to ship a deployable artifact the customer will run, with all the supply-chain and operational implications that entails.

Either way, you have a connectivity problem, and the connectivity problem is the gating item on the deal.
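
To make the connector option concrete: the usual way to avoid an inbound port is for the customer-side connector to open the connection itself and let tool calls flow back over it. The sketch below is a minimal illustration on localhost, not any vendor’s actual design. The function names, the JSON-lines framing, and the `lookup_ticket` tool are all assumptions made for the example.

```python
import json
import socket
import threading


def vendor_side(listener: socket.socket, results: list) -> None:
    """Vendor relay: waits for the connector to dial in, then sends a tool call."""
    conn, _ = listener.accept()
    with conn, conn.makefile("rw", encoding="utf-8") as stream:
        # The request travels vendor -> customer, but over a connection
        # that the customer side opened.
        stream.write(json.dumps({"tool": "lookup_ticket", "id": 42}) + "\n")
        stream.flush()
        results.append(json.loads(stream.readline()))


def customer_connector(vendor_addr) -> None:
    """Customer connector: never listens; it dials out and serves one request."""
    with socket.create_connection(vendor_addr, timeout=5.0) as conn, \
            conn.makefile("rw", encoding="utf-8") as stream:
        request = json.loads(stream.readline())
        # Hypothetical local tool; a real connector would call the customer's system here.
        reply = {"tool": request["tool"], "result": f"ticket {request['id']} is open"}
        stream.write(json.dumps(reply) + "\n")
        stream.flush()


def demo() -> dict:
    # Only the vendor side binds a listening socket.
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))
    listener.listen(1)
    listener.settimeout(5.0)
    results: list = []
    worker = threading.Thread(target=vendor_side, args=(listener, results), daemon=True)
    worker.start()
    customer_connector(listener.getsockname())
    worker.join(timeout=5.0)
    listener.close()
    return results[0]


if __name__ == "__main__":
    print(demo())
```

The customer-side function contains no `bind` or `listen` call, which is the property a firewall team cares about. Everything else (identity, encryption, audit) still has to be layered on top.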

The current options

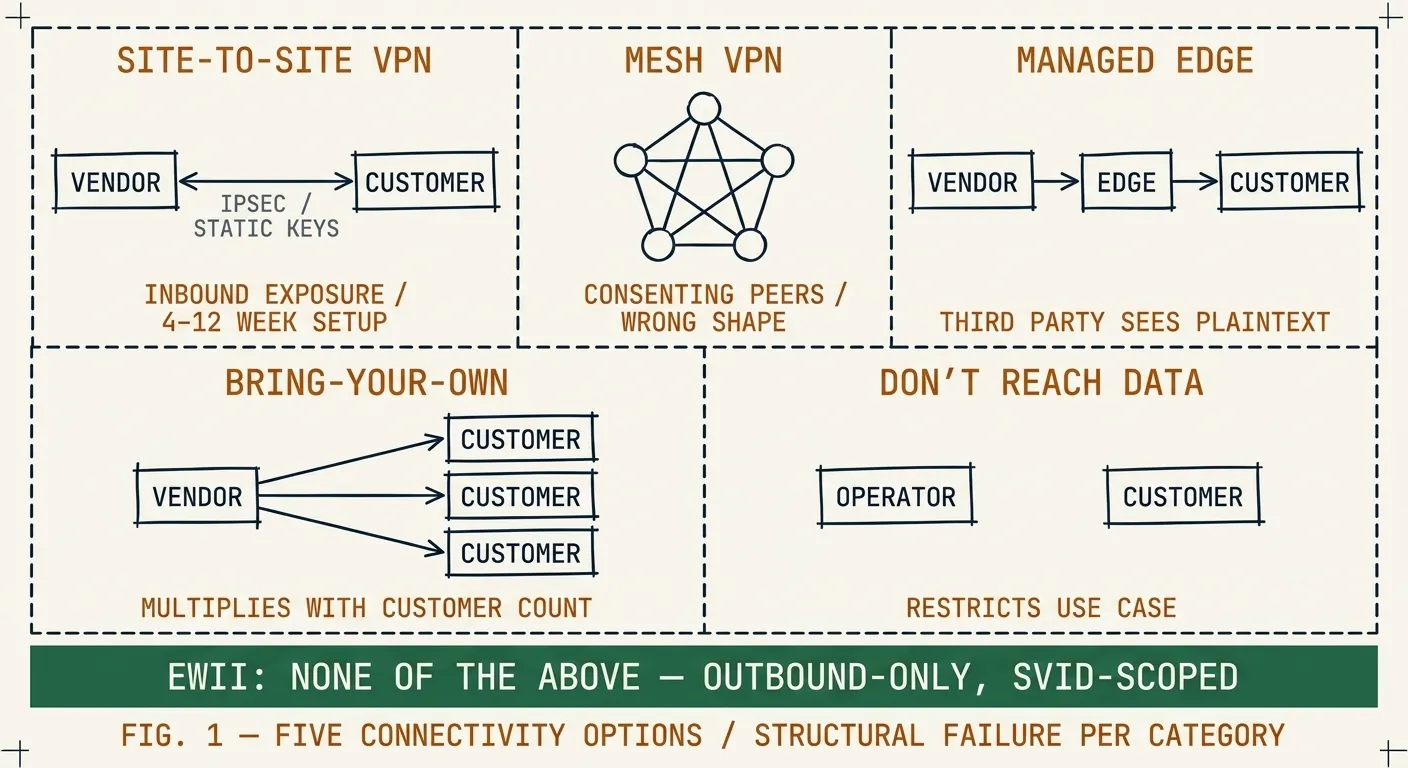

Walk into any AI-vendor-to-regulated-customer architecture review and the options on the whiteboard will be a subset of the following five.

1. Site-to-site VPN

The customer puts a VPN appliance on their network and gives the vendor a tunnel into a specific subnet. The vendor’s services on the other end of the tunnel can reach the targeted services as if they were inside.

This works. It also takes 4–12 weeks to land in a regulated environment. The customer’s network team has to coordinate with the vendor’s network team. Both sides have to agree on a tunnel topology, a credential rotation policy, and an incident-response runbook. The customer’s compliance team has to review the inbound exposure, even though it is “only” a tunnel.

The deeper problem is that a site-to-site (S2S) VPN is built around the network-as-trust-boundary model. If the vendor’s network is compromised, the tunnel is part of the customer’s exposure. Every regulated customer’s risk register grows by one entry per AI vendor they integrate this way. The customers know this. They are tired of it.

2. Private network (Tailscale, ZeroTier, etc.)

A mesh VPN puts vendor and customer nodes on the same logical network. The attack surface is smaller than an S2S VPN, the operational story is much better, and for many use cases this is the right answer.

For operator-to-customer one-way connectivity, it is still the wrong shape. Mesh networks assume consenting peers: the customer’s admin enrolls infrastructure into the vendor’s tailnet, or vice versa. The audit boundary becomes the tailnet’s audit boundary, which the customer does not control. The compliance review ends up covering different ground than the customer would like.

3. Cloudflare Tunnel and similar managed-edge offerings

Outbound-only connectivity through a third-party edge. Cloudflare has built this well; the data path is sound and the operational story is good.

The structural issue is that the data path runs through the third party. For most use cases that is fine. For PHI, payment data, and Protected B, the answer “your data traverses Cloudflare in a form Cloudflare can decrypt” is not the answer the customer’s privacy officer wants to hear.

4. Bring-your-own connector

The vendor builds a custom connector for the customer environment. The customer audits and deploys it. This is what most AI vendors actually do today, because the first three options have known objections.

Each connector is a per-customer engineering project. It needs identity, encryption, key custody, audit, revocation, and an operational runbook. Multiply by N customers. The vendor’s connectivity engineering team grows as a fraction of the company. The connectors drift from each other. New customer environments take 4–8 weeks of vendor engineering time before the sales team can demo end-to-end.

5. Don’t reach the data

The vendor restricts the product to use cases where customer data does not have to be touched, or where the customer ships data to the vendor’s environment in a sanitized form. This is the “ChatGPT for the marketing team” pattern.

It works for some customers and some use cases. It does not work for clinical decision support, claims processing, fraud detection, lawful intercept, or any use case where the value of the AI product depends on operating against the production data. The customers that need those use cases are also the customers who pay the most for them.

What the customer security team actually wants

If you sit through enough security reviews, the same five questions come up. They are not the questions the AI vendor’s marketing materials usually answer. They are the questions a connectivity layer has to answer.

- Where does our data physically traverse? Specifically: which datacenters, in which jurisdictions, operated by which entities. The procurement office wants a one-paragraph answer with two-sentence follow-ups.

- Who can decrypt our traffic, and where does that decryption happen? The reviewer wants to know which parties hold which keys, where each key was generated, where each key is stored, and what would have to happen for a key to be exposed.

- What is the unit of authentication? Connection? Session? Request? The reviewer cares because revocation latency depends on it.

- Where do the audit logs live, and can they be tampered with by your compromised admin? The audit trail is the reviewer’s last-mile defense. If it shares a trust boundary with the system being audited, it is not actually a defense.

- What is the cryptographic isolation between us and your other customers? Logical isolation depends on every line of multi-tenant code being correct. Cryptographic isolation depends on one verification step. The reviewer prefers the latter.

A vendor who can answer these without hedging closes the security review. A vendor who hedges enters a multi-month back-and-forth that ends, often, with a conditional yes that becomes a quiet no.
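
The third question, the unit of authentication, is the easiest of the five to show in code. The toy model below is a sketch under stated assumptions, not a real gateway: the `Gateway` class, the credential name, and the in-memory revocation set are all hypothetical. It counts how many requests still succeed after a credential is revoked, depending on whether the check happens once per session or on every request.

```python
from dataclasses import dataclass, field


@dataclass
class Gateway:
    """Hypothetical gateway that checks credentials per session or per request."""
    check_every_request: bool
    revoked: set = field(default_factory=set)

    def open_session(self, credential: str) -> dict:
        # Both modes authenticate when the session is opened.
        if credential in self.revoked:
            raise PermissionError("credential revoked")
        return {"credential": credential}

    def handle(self, session: dict, request: str) -> str:
        # Only the per-request mode re-checks the credential here.
        if self.check_every_request and session["credential"] in self.revoked:
            raise PermissionError("credential revoked")
        return f"ok: {request}"


def requests_served_after_revocation(gateway: Gateway, pending: int) -> int:
    """Open a session, revoke the credential, then count requests that still succeed."""
    session = gateway.open_session("vendor-workload-1")
    gateway.handle(session, "read record 1")
    gateway.revoked.add("vendor-workload-1")  # the customer pulls access here
    served = 0
    for i in range(pending):
        try:
            gateway.handle(session, f"read record {i + 2}")
            served += 1
        except PermissionError:
            break
    return served


if __name__ == "__main__":
    print(requests_served_after_revocation(Gateway(check_every_request=False), 3))  # 3
    print(requests_served_after_revocation(Gateway(check_every_request=True), 3))   # 0
```

With session-level checks, revocation only takes effect when the session ends, so the exposure window is as long as the longest-lived session. With per-request checks, it is bounded by one request.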

What a clean connectivity layer would look like

The category gap can be described in seven properties. A connectivity layer that satisfies them is, by construction, the right answer to the five questions above. We listed the seven in /compare/vs-zero-trust-marketing. The short list:

- No implicit trust based on network location

- Per-request authentication and authorization

- Workload identity, not user identity

- Cryptographic tenant isolation

- Outbound-only at the customer edge

- End-to-end encryption with no relay-side decryption

- Tamper-evident audit trail

Building a layer that satisfies these is a 6–18 month engineering project: about half is the cryptographic and identity work, and the other half is the operational and packaging work. We know because we did it. The result is Ewii. It is not the only product that satisfies the seven, but it is the one we built and the one we operate.
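
Of the seven properties, the tamper-evident audit trail is the most self-contained to illustrate. The following is a generic hash-chain sketch, not a description of how Ewii or any other product implements it. The entry layout, the `GENESIS` value, and the function names are assumptions made for the example. The idea is that each entry’s hash covers the previous entry’s hash, and the latest hash (the head) is kept somewhere the audited system’s admin cannot write.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for an empty chain


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> str:
    """Append an event and return the new head hash."""
    prev = log[-1]["hash"] if log else GENESIS
    head = entry_hash(prev, event)
    log.append({"event": event, "hash": head})
    return head


def verify(log: list, trusted_head: str) -> bool:
    """Recompute the chain and compare its head with one stored outside the log."""
    prev = GENESIS
    for entry in log:
        if entry_hash(prev, entry["event"]) != entry["hash"]:
            return False  # an entry was edited in place
        prev = entry["hash"]
    return prev == trusted_head  # catches truncation and full rewrites


if __name__ == "__main__":
    log: list = []
    append(log, {"actor": "svc-a", "action": "read", "record": 1})
    head = append(log, {"actor": "svc-a", "action": "read", "record": 2})
    print(verify(log, head))           # True
    log[0]["event"]["record"] = 99     # a compromised admin edits history
    print(verify(log, head))           # False
```

The chain alone only makes tampering detectable. Whether it counts as a defense depends on the head hash living in a different trust boundary, which is the point of the reviewer’s fourth question.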

Where this goes next

If you are evaluating connectivity options for an AI product targeting regulated customers, the next two essays in this series go deeper:

- Why outbound-only beats site-to-site VPN — the architectural case for removing inbound exposure as the dominant design decision.

- Workload identity vs network identity — why “the IP I’m on” is not an identity, and what an identity bound to a workload looks like in practice.

If you are about to enter a procurement cycle and you want a head start on the security questionnaire, “What a procurement-friendly architecture review looks like” is a checklist version of what most reviewers ask.