What a procurement-friendly architecture review looks like

This essay is for the vendor side. If you are building an AI or SaaS product that is about to enter a procurement cycle with a regulated customer — a hospital, a bank, a federal agency, a payment processor, an insurer, a provincial ministry — this is what your customer’s security team is going to ask, in roughly the order they are going to ask it, and what artifacts they expect you to bring.

There are no surprises in a procurement-friendly architecture review. The artifact list is well known to everyone who has done one. What separates a 4-week procurement cycle from a 14-week one is the quality of the artifacts. The goal of this essay is to help you bring the right artifacts the first time, so you do not get stuck in the back-and-forth that turns a conditional yes into a quiet no.

The shape of a regulated procurement review

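A regulated procurement review is a sequenced process, not a single meeting. It typically runs in four phases that take 4–14 weeks in total, depending on how prepared both sides are. The phases can overlap, which is why the total is shorter than the sum of the per-phase ranges below.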

Phase 1 — Initial questionnaire. The customer sends you a security questionnaire. CAIQ-Lite is the most common starting point in 2026; some customers have their own. You fill it out. This phase takes 1–3 weeks including back-and-forth on ambiguous questions.

Phase 2 — Architecture review. The customer’s security architect (and sometimes their privacy officer) reads your questionnaire response and schedules a 60–90 minute call. This is where the technical questions get asked. This phase takes 1–4 weeks including the call and follow-up artifacts.

Phase 3 — Documentation review. The customer’s compliance and legal teams review your trust-center documents, your DPA, your BAA (if applicable), your subprocessor list, and your audit reports. This phase takes 2–6 weeks.

Phase 4 — Pilot or contract. The customer signs a pilot agreement or moves to contract. This phase takes 1–4 weeks if Phases 1–3 went cleanly.

The vendor-side preparation that compresses these timelines is documented. We will walk through it.

Phase 1: the questionnaire

Most regulated customers have moved to questionnaire frameworks rather than ad-hoc questionnaires. The four most common in 2026:

- CAIQ-Lite (Cloud Security Alliance). 73 questions across 17 control domains. Usable as-is for most cloud-delivered AI products.

- HITRUST CSF questionnaire. Healthcare-focused. If your customer is a hospital, payor, or clinical-AI buyer, expect this one.

- PCI-DSS SAQ. Payment-card-data customers will use the appropriate SAQ for their merchant level. SAQ-A and SAQ-D are the most common AI vendors encounter.

- CCCS ITSG-33 control mapping. Canadian federal customers will ask for an ITSG-33 mapping at the Protected B level.

Pre-fill all four. Do this once, with the rigor of a quarterly compliance exercise. Store the answers in a single source of truth (a spreadsheet, a GRC tool, a structured document). Each answer should reference the specific control or technical implementation it is based on, with a link to the relevant trust-center document.

The most common preventable issue is inconsistent answers across questionnaires. The reviewer reads your CAIQ-Lite answer and your CCCS mapping side by side. If the same underlying control is described differently, the reviewer flags it and the back-and-forth begins.
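One way to catch this before the reviewer does is to key every questionnaire answer to the underlying control and compare the wording across frameworks. The following is a minimal sketch, not a GRC tool: the `Answer` record, the `control_key` naming scheme, the question IDs, the URLs, and the sample statements are all hypothetical and exist only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Answer:
    framework: str     # e.g. "CAIQ-Lite" or "ITSG-33"
    question_id: str   # placeholder IDs below, not real framework IDs
    control_key: str   # hypothetical internal key for the underlying control
    statement: str     # the wording the reviewer will read
    evidence_url: str  # link to the trust-center document backing the answer


def find_inconsistencies(answers):
    """Return the controls that are described in more than one way."""
    by_control = defaultdict(set)
    for a in answers:
        # Normalize case and whitespace so only real wording differences count.
        by_control[a.control_key].add(" ".join(a.statement.lower().split()))
    return {key: sorted(stmts) for key, stmts in by_control.items() if len(stmts) > 1}


# Illustrative data only.
answers = [
    Answer("CAIQ-Lite", "Q-EXAMPLE-1", "encryption-at-rest",
           "Customer data at rest is encrypted with AES-256-GCM.",
           "https://example.com/trust/encryption"),
    Answer("ITSG-33", "ROW-EXAMPLE-7", "encryption-at-rest",
           "Data at rest uses industry-standard encryption.",
           "https://example.com/trust/encryption"),
    Answer("CAIQ-Lite", "Q-EXAMPLE-2", "audit-log-retention",
           "Audit logs are retained for 13 months.",
           "https://example.com/trust/audit"),
    Answer("ITSG-33", "ROW-EXAMPLE-9", "audit-log-retention",
           "Audit logs are retained for 13 months.",
           "https://example.com/trust/audit"),
]

issues = find_inconsistencies(answers)
for control, statements in issues.items():
    print(control, "is described", len(statements), "different ways")
```

An exact-match comparison like this is deliberately strict. It only works if each control has one canonical statement that every questionnaire answer reuses, which is the discipline the single source of truth is meant to enforce.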

Phase 2: the architecture review

The architecture review call is the inflection point. If you go in with the wrong artifacts, you spend the next four weeks producing them and the review gets rescheduled. If you go in with the right artifacts, the call closes the technical evaluation rather than opening it.

The artifacts the reviewer expects to see, in order:

1. Topology diagram

A single-page diagram showing every component, every network boundary, every trust boundary, and the data flow between them. The diagram should identify where the customer’s data lives, where it traverses, and where each component is operated.

The diagram should be cleaner than your engineering team’s whiteboard. Hire a designer or use a diagramming tool that produces presentable output. The reviewer will spend 5–15 minutes on this diagram. The clarity of the diagram correlates with how seriously the reviewer takes the rest of the review.

2. Cryptographic primitive matrix

A table listing every cryptographic primitive in the system, what it is used for, where the keys live, who holds them, and the rotation policy. Use formal language: “ChaCha20-Poly1305, AEAD with 96-bit nonce, session-keyed via HKDF-SHA256 from X25519 ECDHE shared secret, rotated on session establishment.” Not “industry-standard encryption.”

The reviewer will check this against the questionnaire answers. The reviewer will flag inconsistencies. Be precise.
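Keeping the matrix as structured data makes it easier to render the same table into every document and to catch vague wording before the reviewer does. The sketch below is one possible shape, not a standard format. The `PrimitiveRow` fields mirror the columns listed above; the key location, key holder, and purpose values are illustrative assumptions, and the list of vague phrases is arbitrary.

```python
from dataclasses import asdict, dataclass, fields

# Arbitrary examples of wording a reviewer is likely to reject.
VAGUE_PHRASES = ("industry-standard", "military-grade", "bank-grade", "strong encryption")


@dataclass(frozen=True)
class PrimitiveRow:
    primitive: str
    purpose: str
    key_location: str
    key_holder: str
    rotation: str


def vague_fields(row):
    """Return the names of fields that contain marketing language."""
    return [
        name for name, value in asdict(row).items()
        if any(phrase in value.lower() for phrase in VAGUE_PHRASES)
    ]


def render(rows):
    """Render the matrix as a plain-text table with aligned columns."""
    headers = [f.name for f in fields(PrimitiveRow)]
    table = [headers] + [[getattr(r, h) for h in headers] for r in rows]
    widths = [max(len(line[i]) for line in table) for i in range(len(headers))]
    return "\n".join(
        " | ".join(cell.ljust(w) for cell, w in zip(line, widths)) for line in table
    )


# Illustrative rows; the values outside the primitive column are assumptions.
good = PrimitiveRow(
    primitive="ChaCha20-Poly1305 (AEAD, 96-bit nonce)",
    purpose="session transport encryption",
    key_location="endpoint memory, derived via HKDF-SHA256 from X25519 ECDHE",
    key_holder="the two session endpoints",
    rotation="on session establishment",
)
bad = PrimitiveRow(
    primitive="industry-standard encryption",
    purpose="session transport encryption",
    key_location="unspecified",
    key_holder="unspecified",
    rotation="unspecified",
)

print(render([good, bad]))
print(vague_fields(bad))
```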

3. Identity and authentication model

A description of who can authenticate as what, and how. Include user identity, workload identity, service-to-service identity, and operator identity. Identify the issuer of each identity, the validation chain, the rotation cadence, and the revocation mechanism.

The reviewer cares about workload identity specifically — see /insights/workload-identity-vs-network-identity for why. If your model uses network identity for any authorization decision, expect to defend it.

4. Audit and observability model

A description of every audit event the system emits, where it is stored, how it is signed (if it is), how the customer can access it, and the retention policy. Include both the operator-side audit (your visibility) and the customer-side audit (their visibility into your system’s behavior on their data).

The reviewer cares about whether the customer can detect misuse without trusting your good behavior. If the answer is “we’ll send you logs,” the reviewer will ask “can your compromised admin tamper with them before you send them?” Have an answer.
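One common family of answers is a tamper-evident log: each record commits to the hash of the record before it, and the customer periodically stores the latest hash as a checkpoint. The sketch below illustrates the idea with SHA-256 from the Python standard library. It is an assumption about how such a log could be built, not a description of any particular product, and the record layout and function names are hypothetical.

```python
import copy
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def entry_hash(prev_hash, event):
    # Canonical JSON so the same event always hashes to the same value.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append(log, event):
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": entry_hash(prev, event)})


def verify(log, trusted_head=None):
    """Check the chain; optionally check it ends at a hash the customer already holds."""
    prev = GENESIS
    for rec in log:
        if rec["prev"] != prev or rec["hash"] != entry_hash(prev, rec["event"]):
            return False
        prev = rec["hash"]
    return trusted_head is None or prev == trusted_head


log = []
for action in ("login", "read-record", "export"):
    append(log, {"actor": "operator-1", "action": action})
checkpoint = log[-1]["hash"]  # the customer stores this outside the vendor's control

# Case 1: an admin edits one record in place. The chain no longer verifies.
edited = copy.deepcopy(log)
edited[1]["event"]["action"] = "noop"
in_place_edit_detected = not verify(edited)

# Case 2: the admin rebuilds the whole chain after the edit. It verifies on its
# own, but it no longer ends at the checkpoint the customer holds.
rewritten = []
for rec in edited:
    append(rewritten, rec["event"])
rewrite_passes_alone = verify(rewritten)
rewrite_caught_by_checkpoint = not verify(rewritten, trusted_head=checkpoint)
```

The second case is the one the reviewer is probing. A hash chain by itself only detects partial edits; detecting a full rewrite depends on the customer (or another party the admin cannot reach) holding a checkpoint or a signature over the chain head.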

5. Failure-mode analysis

A short document (two pages is plenty) describing what happens when each major component fails or is compromised. “What happens if the relay is compromised?” “What happens if the operator’s key-signing ceremony is compromised?” “What happens if the customer’s Client is compromised?” The reviewer is testing whether you have thought through these scenarios.

The right level of detail is “the cryptographic primitive that prevents the compromise from being catastrophic” or, if there isn’t one, “the operational mitigation we have in place.” Do not say “this is unlikely.” The reviewer’s job is to assume it happens.

6. Subprocessor and data-residency table

A table listing every subprocessor that handles customer data, what they handle, where they are located, and what contractual protections are in place. Include CDN, monitoring, alerting, and any third-party API the data flow touches.

For Canadian Protected B customers, also include a residency analysis — which datacenters, in which jurisdictions, with what control over the data path. The reviewer will want to know specifically that the data does not leave Canadian-controlled infrastructure if the contract specifies that.
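If the subprocessor table is kept as data, the location part of the residency question can be checked mechanically before every release of the trust-center pack. The sketch below assumes a hypothetical `Subprocessor` record with ISO 3166-1 alpha-2 country codes; the vendor names and contract terms are made up. It checks processing location only. Whether infrastructure counts as Canadian-controlled also depends on ownership and legal jurisdiction, which a location field does not capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subprocessor:
    name: str
    handles: str        # what customer data the subprocessor touches
    countries: tuple    # ISO 3166-1 alpha-2 codes where the data is processed
    protections: str    # contractual protections in place


def residency_violations(subprocessors, allowed_countries):
    """Map each offending subprocessor to the countries outside the allowed set."""
    allowed = set(allowed_countries)
    violations = {}
    for s in subprocessors:
        outside = sorted(set(s.countries) - allowed)
        if outside:
            violations[s.name] = outside
    return violations


# Hypothetical entries for illustration.
table = [
    Subprocessor("ExampleCDN", "encrypted request payloads", ("CA",), "DPA, SCC-equivalent"),
    Subprocessor("ExampleMonitoring", "request metadata", ("CA", "US"), "DPA"),
    Subprocessor("ExampleAlerting", "alert text, no payloads", ("CA",), "DPA"),
]

violations = residency_violations(table, allowed_countries={"CA"})
print(violations)
```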

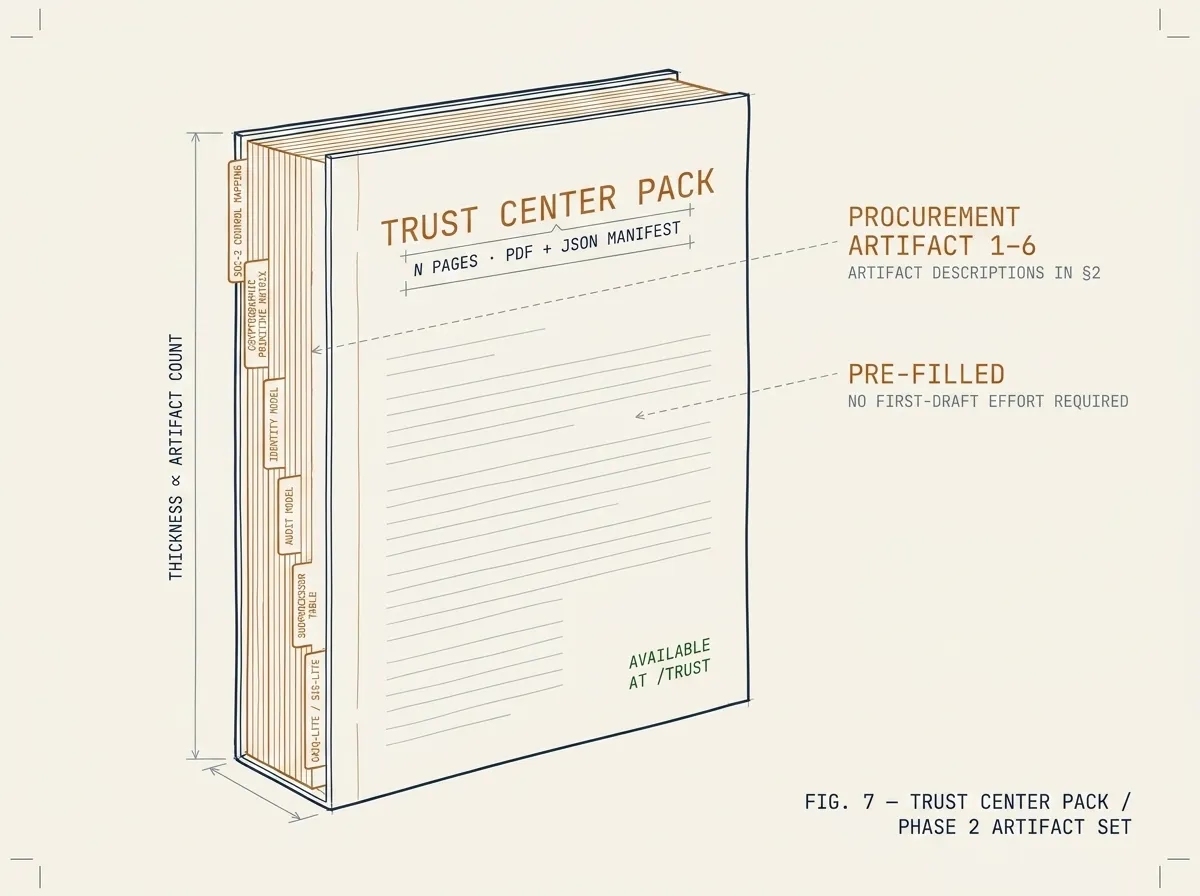

7. Trust-center pack

A single PDF or document set containing everything above plus your audit reports (SOC 2 Type II, ISO 27001, HITRUST if applicable), your DPA, your BAA template, your subprocessor list, your incident-response runbook summary, and your business continuity / disaster recovery summary.

Keep the trust-center pack updated. Quarterly is the right cadence for the artifacts that change; annually for the audit reports. Stale artifacts are a frequent reviewer complaint.

Phase 3: documentation review

Documentation review is largely out of the technical team’s hands at this point. The customer’s legal and compliance teams are reading. The vendor’s job during this phase is to respond promptly to follow-up questions and not to introduce surprises.

The most common preventable issue in Phase 3 is a discrepancy between the technical artifacts (Phase 2) and the legal artifacts (Phase 3). If your DPA says one thing about subprocessor notification and your trust center says another, the customer’s legal team will flag it and the review pauses.

Do a Phase 2/Phase 3 consistency review before you enter procurement. Have the same person review both sets of documents.

Phase 4: pilot or contract

If the previous three phases went cleanly, Phase 4 is an operational step rather than a risk evaluation. The customer’s procurement team is filling out purchase orders. The vendor’s sales team is celebrating.

If you arrive at Phase 4 with unresolved Phase 2 or 3 issues, the contract negotiation will surface them and the timeline extends. The right move at this point is to resolve the issues with the security and compliance teams directly, not to negotiate around them in the contract.

What this means for product strategy

The artifacts above are not a procurement-team wish list. They are the working tools of the customer-side reviewers. A vendor who treats them as a checklist to fill in after the product is built will find that the product is hard to describe in the reviewers’ terms.

The best AI and SaaS products targeting regulated industries are designed, from the start, to produce these artifacts naturally. The topology diagram is clean because the architecture is clean. The cryptographic primitive matrix is short because the system uses few primitives. The identity model is workload-identity-based because it was built that way, not retrofitted.

This is the deeper case for using a productized connectivity layer like Ewii rather than building one yourself. We have already produced the artifacts. The trust-center pack is ready to drop into your customer’s procurement folder. Your security team adds your product-specific content to it. The connectivity-layer content is already written.

Schedule an Architecture Review if you want to see what this looks like for your specific case. The 60-minute working session is structured exactly like the customer-side architecture review above — the same artifacts, the same order, the same level of detail. The output is your trust-center pack with the connectivity-layer artifacts already included.